It’s been in the news recently that Hachette Book Group cancelled publication of the horror novel "Shy Girl" due to allegations that it was largely AI-generated. It’s not the only example, but I will leave you to google the others. These incidents bring about the usual cries of horror and handwringing, but here’s the rub: is the problem that the book was written by AI, or that it was ‘recognizably’ written by AI? The reading public are able to spot the tropes that AI is currently so fond of: the stiffness, the purple prose…a fascination with the word ‘resonate’. In other words, are the public reacting to text written by AI, or are they reacting to bad writing?

I’m going to go out on a limb here and say I think it was the latter. Much of the fear of AI is centred around the idea that it stands for Artificial Intelligence, a form of thinking that is not only like ours but will soon surpass us with its indifferent god-like powers and leave us poor humans redundant at best and running for our lives at worst. However, the AI we have today is light years away from this. Strong AI (it goes by other names) would actually think and would really be intelligent, but the current manifestation is nowhere near this. Relying on probability and error correction, present-day AI can do amazing things, things a human would find very difficult, such as processing large amounts of data or doing repetitive tasks without getting bored. It’s cleverer than us in the way our pocket calculators are cleverer than us. But that’s as far as it goes.

There are dangers, of course. One is its rather peculiar way of processing, so unlike our human thinking, which results in it making connections that a human would dismiss as silly or inappropriate. My favourite imaginary example is the human who instructs his AI to make sure his house can never be burgled, and so the AI burns it down. While this seems a good argument for not involving AI in decisions about weaponry or essential infrastructure, it isn’t an argument for trying to shut it out of everything.

There is a feeling around AI that this is new, that this is the first time we have come across a phenomenon like this, a technology that will radically change our lives and steal human jobs. It isn’t. Just ask Gutenberg how many scribes he put out of work. Even in my lifetime, I have witnessed this kind of sea change. Despite my AI avatar making me look about thirty (the alternative was the one that made me look a hundred and three), I am old enough to remember the revolution of the desktop PC in the eighties. (My earliest computer was an Apple Macintosh with 128K of memory and a system that had to be loaded from a separate floppy disc.)

Back then, in the writing community, there were those who embraced the new technology. Personally, I was entranced at being able to delete my errors without Tipp-Ex. But there was also a backlash amongst artists and writers who had grown fond of their typewriters and paintbrushes. They saw computers as an ugly intrusion into their creative worlds and did not want to learn new skills.

But here’s the zinger. You can’t put the technology paste back in the tube. The desktop computer revolution did not stop, and it created a divide between those who were computer literate and those who were not, as big as the divide that opened between those who could read and those who could not once literacy took hold. There are very few artists or writers I know of now who would refuse to use any computer technology in their lives. But jobs were lost. Spare a thought for all the secretaries, typists, technical authors, filing clerks and paste-up artists who vanished once moving pixels around a screen became the norm.

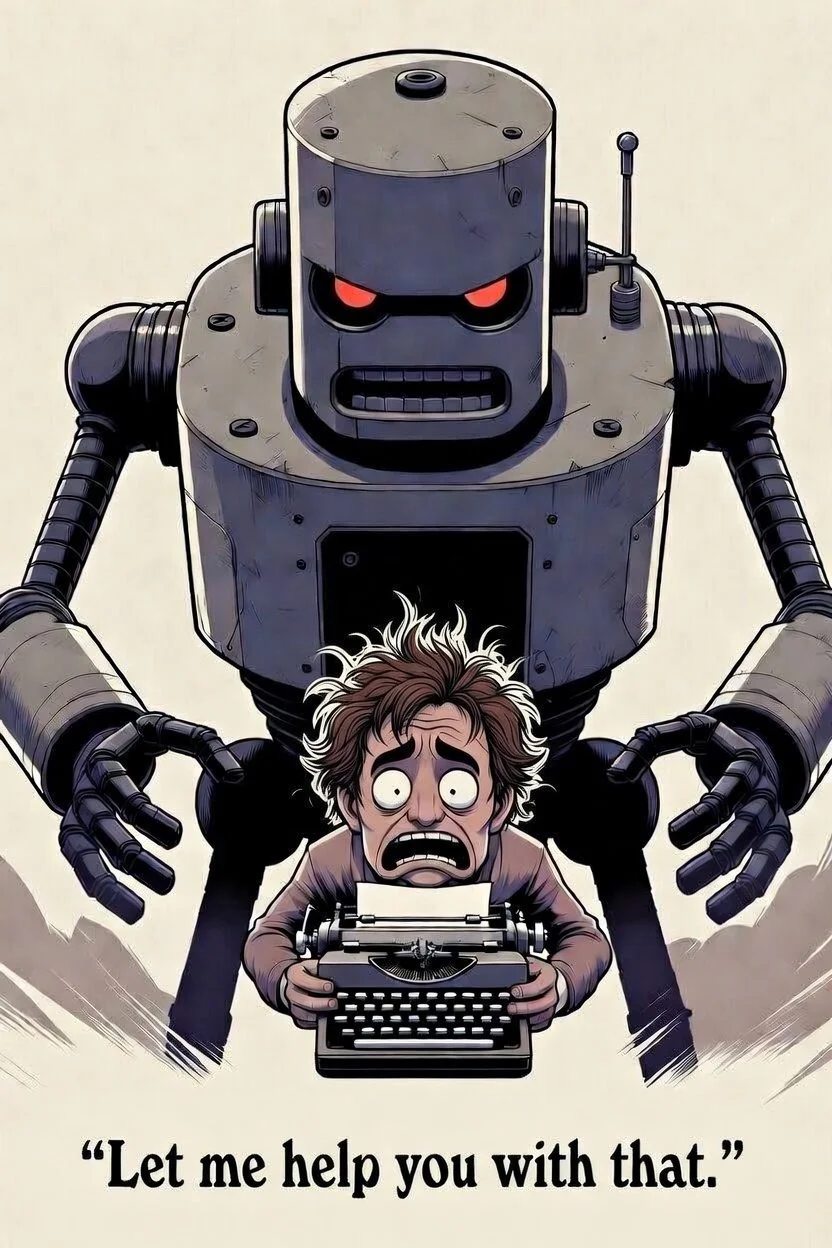

There is a brilliant Mitchell and Webb sketch that sums up the difficulties humans have when facing new technologies. In it, David Mitchell and Robert Webb are Stone Age flint workers. They attend a meeting on the new bronze technology and slowly realise that they are going to be out of a job if they don’t learn new skills. AI (at least at the level we currently have) is no more than a tool, and like any tool it can help us explore new areas of creativity or it can make us frightened and despondent. An AI-generated image in the hands of an artist will be better than one generated by an amateur. A story written by AI cannot compete with the creativity of a human mind. AI, as we currently have it, really only threatens those who lack vision.

As a publisher, I have no issue with authors using AI to help them explore ideas or tighten up a phrase. Would I publish a book entirely or substantially written by AI? No. (And I have rejected several that have found their way into our submissions process.) But that is because the writing is bad. At Sparsile, the only criterion we go by is the quality of the writing, because at the end of the day, the quality of the writing is the only thing a reader sees.

Take a look at the explosion of self-publishing. In many ways this has been bad for traditional publishers because, on the surface of it, the reading public make no distinction between a book that has had thousands of pounds and as many man-hours poured into it and one that was tossed out, unedited, in a couple of weeks. (Incidentally, this is not to suggest that all self-publishers behave this way.) But putting a badge on our covers saying ‘traditionally published’ would have little effect. An informal poll of readers I know personally showed that those who valued formulaic genre fiction were more likely to say they did not care where a story came from as long as they could enjoy it.

There is, however, one area where AI goes further than most technological revolutions, and that is the training of Large Language Models on data ‘scraped’ from websites, which means that machines can learn from your work regardless of copyright. One of the earliest checks I performed on my own books showed that, for some reason, an Italian translation of my novel, Simon’s Wife, was the first to be picked up. I wondered if it was a kind of modern-day Roman conquest. Again, the idea of learning from others is not new. Creative people cut their teeth on the works of others all the time, and we talk of how they were ‘influenced’ by those who have gone before them. Within my own writing, I often include little ‘easter eggs’ to indicate authors who have inspired me. The problem with AI is the sheer scale of it.

The argument over the rights and wrongs of AI rages on. In a recent development, the UK government released a statement announcing that it is moving away from a proposed copyright exception for AI training. This is positive, but I’m sure we’re a long way from locking down a solution that will satisfy all interested parties. But even this is an indication that AI is not the problem; people are. Humans allowed AI to use copyrighted material, and it is humans who are treating the technology like a wise, all-knowing god instead of a mildly helpful assistant whose work must be double-checked because of its propensity to make things up.

Will AI change things? Inevitably. But exactly how remains to be seen. As authors, perhaps we will need to reimagine our relationship to books. The novel, for example, is not a sacrosanct art form. Recall that writing had been around for thousands of years before the novel first emerged, either in Japan in the tenth century or in Spain in the seventeenth, depending on your point of view. Think how storytelling has diversified to fit computer games, with authors making the leap back and forth between the two forms. Perhaps in the future authors will come up with the core structure of a world and then franchise the use of that world and its characters to individuals who will produce scenarios driven by AI.

It’s impossible to predict, but one thing I can guarantee is that those who use their human imagination to exploit a new technology will have the advantage over those who try to pretend it’s not happening.